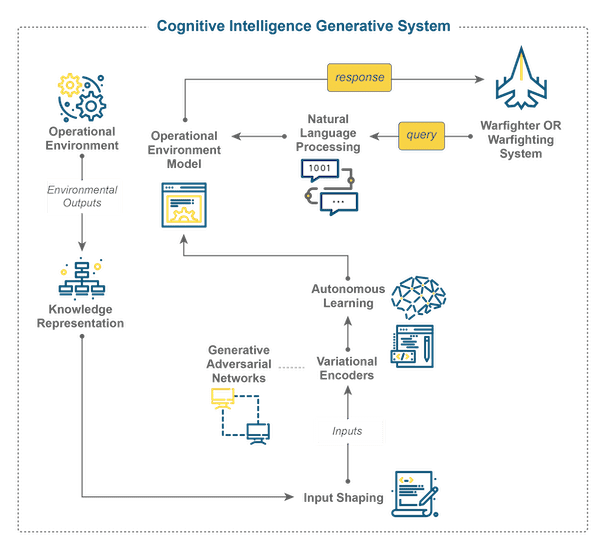

The DoD recently announced a task force specifically charged with developing military applications for generative artificial intelligence (GI), one of many important AI concepts attracting increased interest. This task force, known as Task Force Lima, is designed to advance the capabilities of both the human and robotic warfighter in the All-Domain Operational Environment (OE). Sentient Digital stays at the forefront of emerging technologies, such as cognitive intelligence and machine learning, by developing tools that increase the capabilities of our warfighters on both land and sea, including the many useful military applications of artificial intelligence. In the same vein, Sentient Digital has begun a new area of research and development combining cognitive intelligence and generative AI. This article explains the basics of cognitive intelligence and generative AI, as well as the potential of combining them.

What is Artificial Cognitive Intelligence?

We generally characterize cognitive intelligence as the processes that humans use to acquire and make use of information. The human cognitive process involves thinking and reasoning about the environment and using the results to make decisions. Artificial Cognitive Intelligence (CI) is a subfield of artificial intelligence (AI) that creates a software agent to emulate the way humans reason. Unlike AI as a whole, CI specifically focuses on giving the software agent a broader range of functions: perception, reasoning, learning, model-building, and problem-solving. Simply put, a system that uses a CI form of intelligence can build a sophisticated model of its environment rather than just perform computation and decision-making based on data received from the environment. This ability gives the CI system a mechanism to respond to environmental inputs as they occur, using rules created from those inputs, rather than having to be trained to respond to them beforehand.

Components of Cognitive Intelligence and Generative Intelligence, Key AI Concepts

At the core of creating a cognitive intelligence and generative intelligence (CIGI) system are four key components:

- Natural Language Processing

- Knowledge Representation

- Generative Adversarial Networks

- Variational Autoencoders

A foundational understanding of these AI concepts is essential to keeping up with recent developments and Sentient Digital’s work.

Natural Language Processing (NLP)

Natural Language Processing (NLP) is a subfield of artificial intelligence that provides algorithms for interpreting and generating coherent, contextual human language. NLP’s purpose is to allow machines to interact with humans in the primary way humans interact with each other: words. While it began in the mid-twentieth century with linguistic rules programmed into computers, NLP has grown in capability since it began to leverage machine learning and, more recently, the self-supervised learning that is one of the core AI concepts behind generative AI.

At the heart of NLP are language models, which are mathematical representations of a language that allow systems to “understand” human language and to replicate it in new configurations. These models incorporate the patterns and definitions of the language and give NLP-based systems a methodology (a series of rules) for interpreting language inputs and generating response outputs. The key aspects of NLP are understanding language syntax, grammar, and context, as well as generating coherent, contextually correct language.

Understanding Language Syntax, Grammar, and Context

NLP algorithms analyze and extract meaning from text documents. This can involve tasks such as part-of-speech tagging (identifying nouns, verbs, adjectives, etc.), named entity recognition (identifying names of people, places, organizations), and syntactic parsing (determining the grammatical structure of a sentence). Additionally, while NLP seeks to understand the definition of words, it must do so in the context in which they are used. In most languages, the same word can have multiple meanings, and the actual meaning is derived from the words that surround it. Importantly, for the warfighter, this allows the system to both understand and respond to the warfighter’s queries.

Generating Coherent, Contextually Correct Language

NLP also involves generating human-like language and, as part of that, must be able to translate one language into another. This is most often used for text summarization, human-prompted content generation, and development of chatbots to interact directly with humans. For the warfighter, this allows a conversation to continue after a pause, because the system can summarize previously discussed or queried information.
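To make the idea of a language model as a "mathematical representation" more concrete, the following is a minimal sketch of a bigram model, one of the simplest kinds of language model. It is far smaller than the models behind modern generative AI and is not a description of any system discussed in this article. The corpus sentences and all function names are hypothetical and chosen only for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(sentences):
    """Count how often each word follows another (a bigram table)."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        # <s> and </s> are illustrative start and end markers.
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def next_word_probability(counts, prev, nxt):
    """Estimate P(nxt | prev) from raw counts (no smoothing)."""
    total = sum(counts[prev].values())
    return counts[prev][nxt] / total if total else 0.0


def generate(counts, max_words=10):
    """Greedily emit the most frequent continuation until the end marker."""
    word, output = "<s>", []
    for _ in range(max_words):
        if not counts[word]:
            break
        word = counts[word].most_common(1)[0][0]
        if word == "</s>":
            break
        output.append(word)
    return " ".join(output)


# Made-up training sentences, for illustration only.
corpus = [
    "the convoy moves north",
    "the convoy holds position",
    "the patrol moves north",
    "the convoy moves south",
]
model = train_bigram_model(corpus)
print(next_word_probability(model, "the", "convoy"))  # 3 of 4 sentences
print(generate(model))
```

The counts capture context in a very limited way: the probability of a word depends only on the word before it. Production systems condition on far more context, but the principle of learning patterns from text and then reusing them to produce new text is the same.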

Knowledge Representation

Knowledge Representation is the systematic process of imposing structure on information using a hierarchical framework. Its objective is to enable computers to store, infer, and recall knowledge in a form that is understandable to both humans and computers. The key aspects of knowledge representation are the development of a subject knowledge base and subject ontologies.

Development of Subject Knowledge Base

Knowledge representation requires a defined knowledge base containing the accepted facts and concepts about the specified domain. This knowledge base can be thought of as a repository that stores all the known representations of that domain’s terminology and expressions. In general, it is drawn from books and papers written about the specific domain. For the warfighter, it is drawn from training manuals, technical manuals, and mission-specific information.

Development of Subject Ontologies

Ontologies are structures that define how entities and corresponding concepts relate to each other within some defined domain. This is done by establishing associations between a concept and an instance of that concept. The process involves developing logical statements that apply logical operators (AND, OR, NOT, XOR) to formulate complex ideas. These ideas are then combined into a rule-based system for interpreting new input. Additionally, frames of reference are used to differentiate between concepts that have similar, but not identical, sets of attributes. For the warfighter, this allows a knowledge representation of military operations to capture slang and acronyms as well as more formal terminology.
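The ideas in this section (concept hierarchies, attributes that separate similar concepts, logical rules, and slang mapped to formal terms) can be sketched in a few lines. This is a toy illustration under stated assumptions, not a real military ontology: every concept, alias, attribute, and function name below is hypothetical.

```python
# Hypothetical mini-ontology; all names are illustrative only.
IS_A = {
    "uav": "aircraft",
    "helicopter": "aircraft",
    "aircraft": "vehicle",
    "truck": "vehicle",
}

# Slang and acronyms mapped to their formal concept.
ALIASES = {"bird": "helicopter", "helo": "helicopter", "drone": "uav"}

# Attributes distinguish concepts that are otherwise similar.
ATTRIBUTES = {
    "uav": {"crewed": False, "armed": True},
    "helicopter": {"crewed": True, "armed": True},
    "truck": {"crewed": True, "armed": False},
}


def canonical(term):
    """Resolve slang or an acronym to the formal concept name."""
    term = term.lower()
    return ALIASES.get(term, term)


def is_a(term, concept):
    """Walk up the hierarchy to test whether term is a kind of concept."""
    current = canonical(term)
    while current is not None:
        if current == concept:
            return True
        current = IS_A.get(current)
    return False


def is_crewed_armed_aircraft(term):
    """Rule built with AND: aircraft AND crewed AND armed."""
    attrs = ATTRIBUTES.get(canonical(term), {})
    return bool(
        is_a(term, "aircraft")
        and attrs.get("crewed", False)
        and attrs.get("armed", False)
    )


def is_uncrewed_aircraft(term):
    """Rule built with AND and NOT: aircraft AND NOT crewed."""
    attrs = ATTRIBUTES.get(canonical(term), {})
    return bool(is_a(term, "aircraft") and not attrs.get("crewed", True))


print(is_a("helo", "vehicle"))           # slang resolved, then hierarchy walked
print(is_crewed_armed_aircraft("bird"))
print(is_uncrewed_aircraft("drone"))
```

Keeping aliases separate from the hierarchy means new slang can be added without touching the rules, which only ever see the formal concept names.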

Generative Adversarial Networks

Generative Adversarial Networks (GANs) are a class of artificial intelligence models that consist of two artificial neural networks (ANNs). These two ANNs compete to refine a synthetically generated concept until it cannot be distinguished from a naturally occurring one. This competition between the generator and the discriminator occurs in “rounds.” In each of these rounds, the generator takes random input and tries to shape it into the desired concept to such a degree that the discriminator cannot tell the difference between it and the ‘real thing’.

The core idea of GANs is to train the generator network until it produces a concept that is indistinguishable from real concepts, while the discriminator network is trained to differentiate between the real and the generated. This process creates a “game” between the generator and the discriminator, leading to improved performance of both networks over time. Initially, the generator produces a poor-quality concept, but in each round, as it tries to fool the discriminator, it learns how to produce a better “fake.” On the opposite side, the discriminator gets better at detecting a fake. It does not alter the real examples; it compares real and generated examples and learns which features separate them. This process continues back and forth until the fake cannot be distinguished from the real. For the warfighter, this allows the system to develop variations of known concepts that resemble familiar concepts yet differ substantially from them.
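The "game" described above is usually expressed as two loss functions, one per network. The sketch below computes them for a single round using binary cross-entropy, with the commonly used "non-saturating" form for the generator. It assumes the discriminator outputs a score strictly between 0 and 1 (its estimated probability that an example is real). The scores are made-up numbers and the function names are hypothetical; no actual networks are trained here.

```python
import math


def discriminator_loss(real_scores, fake_scores):
    """Low when real examples score near 1 and generated ones near 0."""
    real_term = sum(-math.log(s) for s in real_scores) / len(real_scores)
    fake_term = sum(-math.log(1.0 - s) for s in fake_scores) / len(fake_scores)
    return real_term + fake_term


def generator_loss(fake_scores):
    """Low when the discriminator scores generated examples near 1."""
    return sum(-math.log(s) for s in fake_scores) / len(fake_scores)


# Illustrative discriminator scores (assumed, not measured).
early = {"real": [0.9, 0.8], "fake": [0.1, 0.2]}  # fakes are easy to spot
late = {"real": [0.5, 0.5], "fake": [0.5, 0.5]}   # fakes are indistinguishable

for name, scores in (("early", early), ("late", late)):
    d = discriminator_loss(scores["real"], scores["fake"])
    g = generator_loss(scores["fake"])
    print(f"{name}: discriminator loss={d:.3f}, generator loss={g:.3f}")
```

As the generator improves, its loss falls while the discriminator's loss rises. When the discriminator can do no better than guessing (a score of 0.5 for everything), its loss under this formulation equals 2 ln 2, which corresponds to the point where the fake cannot be distinguished from the real.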

Variational Autoencoders

Variational Autoencoders (VAEs) are probabilistic generative models that use neural networks as part of their structure. They are related to autoencoders, whose purpose is to reduce the dimensionality of an input by combining or eliminating features of the input data. A VAE consists of an encoder and a decoder.

In a VAE, the encoder network maps its input to the parameters of a probability distribution (e.g., the mean and variance of a Gaussian) over a lower-dimensional latent space, rather than to a single point. Training keeps this learned distribution close to a simple, fixed distribution (e.g., a standard Gaussian). Because of this mapping, the VAE can generate new data: a value is sampled from the simple distribution, and the decoder transforms it, using its learned parameters, into a new data point. These mechanisms allow the system to deal with the ‘noise’ of environmental inputs, and thus the warfighter can present the same information in different ways.
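Two small calculations sit at the center of a VAE and can be shown without any neural network: drawing a latent sample from the distribution the encoder describes (often called the reparameterization trick), and measuring how far that distribution is from a standard Gaussian (the KL divergence term used during training). The sketch below assumes a diagonal Gaussian latent space. The function names and the example means and log-variances are hypothetical stand-ins for what a trained encoder would output.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps drawn from N(0, 1)."""
    return [
        m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
        for m, lv in zip(mu, log_var)
    ]


def kl_to_standard_normal(mu, log_var):
    """KL divergence from N(mu, sigma^2) to N(0, 1), summed over dimensions."""
    return 0.5 * sum(
        m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mu, log_var)
    )


rng = random.Random(0)  # seeded so repeated runs draw the same samples

# Assumed encoder outputs for one input (illustrative values only).
mu, log_var = [1.0, -0.5], [-1.0, -1.0]

# Each call yields a slightly different latent point for the same input.
samples = [reparameterize(mu, log_var, rng) for _ in range(3)]
print(samples)
print(kl_to_standard_normal(mu, log_var))
```

Because each input maps to a region of the latent space rather than a single point, nearby variations of the same information land close together, which is one way to read the tolerance to ‘noise’ described above.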

Autonomous Learning and World Model Construction

Another significant aspect of cognitive intelligence is autonomous learning. In autonomous learning, a cognitive intelligence can develop additional knowledge or sets of actions without being explicitly programmed to do so. This allows the CIGI to learn from its environment. For the warfighter, this allows the system to have insight into the mission that would not have been known beforehand. Consequently, the system can adapt to unexpected changes in the OE as a mission proceeds.

In the context of CIGI, a World Model is a mathematical abstraction of the warfighter’s operational environment (OE) whose inputs (variables) represent the factors that govern the warfighter’s world. The ability to build a World Model is what truly separates CIGI as an advanced form of AI. Building the World Model allows fully autonomous learning, which gives rise to emergent solutions that were outside the scope of the human programming that built the system. For the warfighter, this makes the system highly adaptive to changes in the OE, with its intelligence being more than just an extension of its prior programming.
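As a rough illustration of learning a model of the environment from observation rather than from pre-programmed rules, the sketch below keeps a table of observed transitions (state, action, resulting state) and predicts the most frequently seen outcome. This is a deliberately simple stand-in and not the World Model construction the article refers to; the class name, states, and actions are all hypothetical.

```python
from collections import Counter, defaultdict


class TabularWorldModel:
    """Learns which outcome tends to follow each (state, action) pair."""

    def __init__(self):
        self.transitions = defaultdict(Counter)

    def observe(self, state, action, next_state):
        """Update the model from one observed transition."""
        self.transitions[(state, action)][next_state] += 1

    def predict(self, state, action):
        """Return the most frequently observed outcome, or None if unseen."""
        outcomes = self.transitions.get((state, action))
        if not outcomes:
            return None
        return outcomes.most_common(1)[0][0]


model = TabularWorldModel()
print(model.predict("bridge", "cross"))  # nothing observed yet

# Early in the mission the bridge is passable.
for _ in range(2):
    model.observe("bridge", "cross", "far_bank")
print(model.predict("bridge", "cross"))

# Conditions change: repeated observations show the crossing is now blocked.
for _ in range(3):
    model.observe("bridge", "cross", "blocked")
print(model.predict("bridge", "cross"))
```

No rule about the bridge was written in advance. The prediction changes only because the observations changed, which is the adaptive behavior this section describes, in miniature.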

Advanced artificial intelligence systems like CIGI are a leap forward in enhancing the capabilities of the warfighter or warfighting system. CIGI can be applied everywhere AI is being applied. The result is a “higher-level,” “highly adaptive intelligence” that can be used in autonomous systems, threat analysis, logistics management, and decision support, among others.

Stay up to date on Cutting-Edge AI Concepts with Sentient Digital

Sentient Digital proudly supports the warfighter, as well as our government and private sector clients, by constantly striving to develop and apply technology to serve their aims. An important aspect of that is keeping up with the advancements in artificial intelligence taking place every day, including innovations such as cognitive intelligence. If you are interested in following Sentient Digital’s work and learning more about these technological developments, visit our blog, which we continually update with relevant industry topics.

If you want to work in this field, applying your scientific expertise to bolster national defense and facilitate important government objectives, Sentient Digital could be the ideal organization for the next step in your career. If you bring the right combination of knowledge, skills, and passion to add to our team, check out our current job postings to see if we have the right role for you.